The current wave of AI video tools has made creation more accessible, but it has also introduced a new kind of friction. Many platforms promise impressive visual results, yet the real experience often feels fragmented: one tool for draft motion, another for cleaner detail, another for style consistency, and often a separate workflow for comparing outputs. That is why platforms built around a well-chosen selection of models matter. In that context, Seedance 2.0 is worth paying attention to, not simply because it can generate video, but because it sits inside a broader workflow that tries to make model choice part of the creative process rather than an afterthought.

What stood out to me on the official pages is that the platform is not framed as a single-purpose generator. It presents video creation as a practical sequence: choose a model, decide whether the input starts from text or from an image, generate variations, and compare the results in one place. That sounds simple, but in practice it changes the user experience. Rather than forcing creators to commit too early to one model philosophy, the platform treats model selection as a flexible decision shaped by the project itself.

That distinction matters because different video ideas fail in different ways. A product demonstration may need cleaner motion and consistency. A short narrative clip may need stronger scene transitions. A stylized concept video may benefit from a more interpretive visual engine. The official positioning suggests that this is exactly where the platform sees its value: not only in producing clips, but in helping users understand which model is more suitable for a given creative brief.

The broader AI video conversation often focuses on quality in the abstract, but creators rarely work in the abstract. They work under deadlines, budgets, format requirements, and brand constraints. From that perspective, the more useful question is not whether a model is powerful, but whether its strengths are legible enough for users to make good decisions quickly.

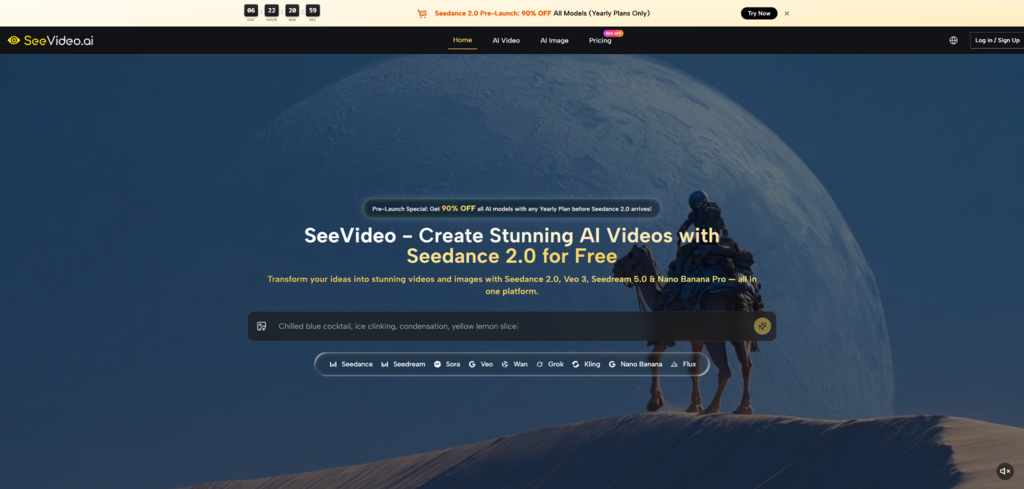

SeeVideo organizes its video workflow around this principle. Seedance 2.0 appears as the core engine for multi-scene generation and audio-guided creation, while the other models are positioned with narrower specializations. Veo 3 is associated with photorealistic video and native audio. Sora 2 is framed around cinematic storytelling. Wan 2.5 is associated more with artistic styling. In practical terms, the platform is not asking users to believe in one universal model. It is asking them to think in terms of fit.

This matters more than it may seem. One of the hidden costs in AI creation is comparison fatigue. When users jump across separate tools, they are not only comparing outputs. They are also comparing interfaces, billing structures, asset libraries, export logic, and inconsistent prompt behaviors. A unified workspace reduces that overhead.

In my view, this is one of the more realistic arguments for a model-aggregation platform. It does not claim that every model suddenly becomes equal. It claims that the surrounding workflow becomes easier to manage. For creators handling many iterations, that can be just as important as raw model capability.

Another way to understand the platform is to see AI video generation as a routing problem. The prompt is not the whole task. The user also has to route the idea toward the right engine. Some concepts want multi-scene structure. Some want reference-image fidelity. Some need faster turnaround for rough drafts. Some need a more polished final look.

That is why the official emphasis on model comparison feels relevant. It reflects a shift in creative practice: making a video is no longer only about writing a better prompt. It is also about selecting a better path.

The Seedance 2.0 AI Video page describes Seedance 2.0 as the platform's core video engine, with particular emphasis on multi-scene generation, support for text, image, and audio inputs, fast turnaround, and professional-quality output. That combination suggests a model designed for more than isolated single-shot experiments.

What makes this positioning interesting is not just the promise of quality, but the type of work it appears intended to support. Multi-scene generation implies that the system is trying to bridge the gap between a single AI clip and something closer to a sequence. Audio input support also points toward a broader interpretation of prompting, where movement can be guided not only by descriptive text or an image, but by sound as well.

In many AI video tools, the result is strongest when the request is small and contained. A character turns, a product rotates, a landscape shifts. The challenge begins when the idea asks for progression. That is where scene continuity and transition logic start to matter.

A visually impressive clip can still feel unusable if the motion does not carry intent from one moment to the next. Based on the official framing, Seedance 2.0 is meant to address this with multi-scene support. I would treat that not as a guarantee of narrative mastery, but as a useful signal about the type of projects it may handle better.

The platform also highlights audio input support. That is important because video direction often depends on rhythm, tone, and pacing that are difficult to fully communicate in text alone. Even when users begin with written prompts, the option to use audio as a guide suggests a more flexible approach to generation.

The official pages repeatedly connect generation speed with production usefulness. That is a sensible framing. In real creative work, quality is only one variable. If iteration is too slow, experimentation becomes expensive. If iteration is fast enough, users can test multiple directions without treating every run like a final exam.

The platform’s public flow is relatively straightforward, which is probably part of its appeal. Instead of describing a long technical setup, it presents creation as a compact sequence.

Users begin by choosing a model according to the result they want. The platform explicitly separates likely use cases: Seedance 2.0 for multi-scene and audio-guided work, Veo 3 for photorealistic output with native audio, Sora 2 for cinematic storytelling, and faster Seedance variants for quicker drafts.
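Read this way, the first step is a small routing rule. The Python sketch below encodes the positioning described above as a mapping from brief requirements to candidate models. The `Brief` fields and the `suggest_models` function are hypothetical illustrations, not part of any SeeVideo API, and the fast Seedance option is left as a generic placeholder because the official positioning does not name a specific variant. The output is a shortlist to generate and compare side by side, not a verdict.

```python
from dataclasses import dataclass


# Hypothetical description of a creative brief; the field names are illustrative only.
@dataclass
class Brief:
    multi_scene: bool = False
    audio_guided: bool = False
    photorealistic: bool = False
    cinematic_story: bool = False
    stylized: bool = False
    quick_draft: bool = False


def suggest_models(brief: Brief) -> list[str]:
    """Return candidate models as a starting hypothesis, following the
    platform's published positioning. This is not a platform API."""
    candidates: list[str] = []
    if brief.quick_draft:
        # Placeholder: the positioning mentions faster Seedance variants without naming one.
        candidates.append("Seedance (fast variant)")
    if brief.multi_scene or brief.audio_guided:
        candidates.append("Seedance 2.0")
    if brief.photorealistic:
        candidates.append("Veo 3")
    if brief.cinematic_story:
        candidates.append("Sora 2")
    if brief.stylized:
        candidates.append("Wan 2.5")
    # Fall back to the core engine when the brief does not point anywhere specific.
    return candidates or ["Seedance 2.0"]


if __name__ == "__main__":
    # A photorealistic story clip yields two candidates worth comparing.
    print(suggest_models(Brief(photorealistic=True, cinematic_story=True)))
```

Returning a list rather than a single model reflects the comparison step: a brief that matches several specializations produces several candidates to test against each other.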

The next step is choosing the generation mode. The official pages describe text-to-video and image-to-video as the main inputs, with some emphasis on audio input in the broader Seedance 2.0 positioning. That means users are not forced into one way of thinking. A concept can start from a sentence, a still image, or in some cases a sound-based creative cue.

After the input is defined, the platform generates the result and allows iteration. The official FAQ makes clear that regeneration and prompt refinement are part of the expected process. That is important because it treats regeneration as normal creative behavior, not as evidence that the first result failed.

One of the clearest platform claims is that users can compare outputs side by side across different models. This is a practical advantage. It turns the workflow from simple generation into evaluation. For many creators, that is where real time savings appear.

A useful way to read the official positioning is that the platform is trying to solve three overlapping problems at once: fragmented subscriptions, fragmented experimentation, and fragmented asset management.

The official pages mention marketing teams, filmmakers, creators, and e-commerce use cases. That range is broad, but it does make sense if the platform’s real strength is model routing rather than one narrowly defined generation style.

A team working on concept pitches, ad directions, or social content often does not need a perfect final output on the first try. It needs a credible first pass that can be reviewed, rejected, or redirected quickly. A platform that makes iteration and comparison easy fits that stage of work well.

Product content is another likely match. A still product image can become motion content through image-to-video workflows, while different models can be tested for different brand tones. In my view, this is one of the more realistic short-term uses for AI video systems because the creative objective is often clearer than in open-ended storytelling.

For short cinematic scenes, the platform’s model spread looks promising on paper. But this is also where users should stay realistic. AI can generate compelling motion and atmosphere, yet narrative precision still depends heavily on prompting, selection, and repeated revision. The platform seems designed to make that revision cycle easier, not to remove it.

The official messaging is confident, but any honest reading should leave room for limits. AI video generation remains sensitive to prompt clarity, scene complexity, and the gap between what a user imagines and what a model can infer.

Even with strong models, vague prompts usually lead to generic results. A better platform can reduce workflow friction, but it cannot eliminate the need for clear creative direction.

The official FAQ openly treats regeneration as normal. I see that as a credible choice. In real use, the first output is often a direction check rather than a finished result. Users should expect experimentation, not one-click certainty.

The platform positions each model with a distinct specialty, but users should still test rather than assume. In my experience with AI tools broadly, model identity is useful as a starting hypothesis, not as a strict rule. A supposedly cinematic model may still underperform on one prompt, while a faster model may unexpectedly produce the cleaner result.

What makes the platform interesting is not that it promises to replace every part of video production. It is that it treats AI creation as a system of choices rather than a single magic button. Seedance 2.0 is presented as the central engine because it covers a wide middle ground: multi-scene work, flexible inputs, and practical speed. Around that, the other models function like optional lenses.

That design choice feels aligned with where AI creation is heading. As more capable models appear, creators do not only need better generation. They need better orchestration. A platform that helps users choose, compare, and iterate inside one environment may ultimately be more valuable than one that only promises the strongest single demo.

For people trying to understand where AI video tools are becoming genuinely useful, that may be the most important point. The story is no longer just about whether a model can create motion from a prompt. It is about whether the surrounding workflow helps turn uncertainty into a usable creative decision.